A recent study found that people older than 65 are more likely to share fake news on social media (read a more accessible account of the study as covered in The Verge). We live in a very confusing information environment. Most people, especially those over 65, gained their education and experience in a completely different world that did not prepare them for the information chaos the Internet has become. Information literacy must have been very different 50 years ago.

There are a few things we take for granted in academia, and one of them is how knowledge is produced and validated. That was, actually, one of my favorite topics to teach. I always opened courses on research methods (most recently, the PhD-level seminar on Qualitative Research Methods I used to teach at Purdue) with the following questions: What is knowledge? Where does knowledge come from? Who decides what counts as (credible) knowledge?

What do I mean by knowledge? Things like the opening statement of this post – that people older than 65 are more likely to share fake news; nutrition/medical information, such as X is good for you, or what are the causes and effects of high cholesterol; theories that explain human behavior; principles that prescribe how computers should behave; and so on.

In academia, we have a system, however flawed, for creating and validating knowledge. Let’s call it the knowledge pipeline. The goal of this post is to explain this knowledge pipeline to people outside academia, in hopes that it will help them determine what is credible knowledge.

Like most non-academic explanations, this one will be over-simplified. Roughly speaking, the academic pipeline looks like this:

- Knowledge production – one or more experts (the researchers) conduct a study that results in some insights (knowledge). Once the study is written up in a paper, the researchers submit it to an academic journal or conference for review and publication.

- Review – a team of experts (the reviewers), not associated with the study, review it for credibility and validity and make a recommendation whether the study is good enough to be published or not. If not, the study goes back to the researchers for improvement, and several rounds of steps 1 & 2 occur. Sometimes the study is abandoned and it doesn’t proceed to the next phase.

- Publication in an academic journal or conference.

Now, let’s look at each step more closely and see what questions we can ask that can help us determine whether we should believe this knowledge or not.

1. Knowledge production

If the research team is employed by a university or independent research center, they are more likely to have sincere intentions. If the researchers are employed by a cigarette company and their study finds that cigarettes are not harmful to health, we have reason to be skeptical of the findings.

Question 1a: What is the researchers’ affiliation? Who do they work for?

Even for researchers affiliated with universities, we might want to ask – what university? Not all universities are created equal. Some universities’ research programs are stronger than others. This is why many news media articles that cover research mention the researchers’ affiliations. For example:

Older Americans are disproportionately more likely to share fake news on Facebook, according to a new analysis by researchers at New York and Princeton Universities.

Let’s say the research originates from a reputable university. Even then, we might ask, who funded the research? Conducting research is expensive. It takes time, equipment, staff. Someone has to pay for it. Many, but not all, research studies are funded from a source external to the university. In many countries, there are government agencies that fund research, but also, corporations, non-profit organizations, and other associations might provide money to fund the work. In theory, university researchers do not allow the source of funding to influence their research results. In practice, that is sometimes difficult.

Published research articles include an acknowledgment disclosing the source of funding (if any). For example, the study on fake news dissemination includes the following acknowledgment:

Funding: This research was supported by the INSPIRE program of the NSF (Award SES-1248077).

“NSF” is an abbreviation for the National Science Foundation, one of the primary agencies of the US federal government that funds research.

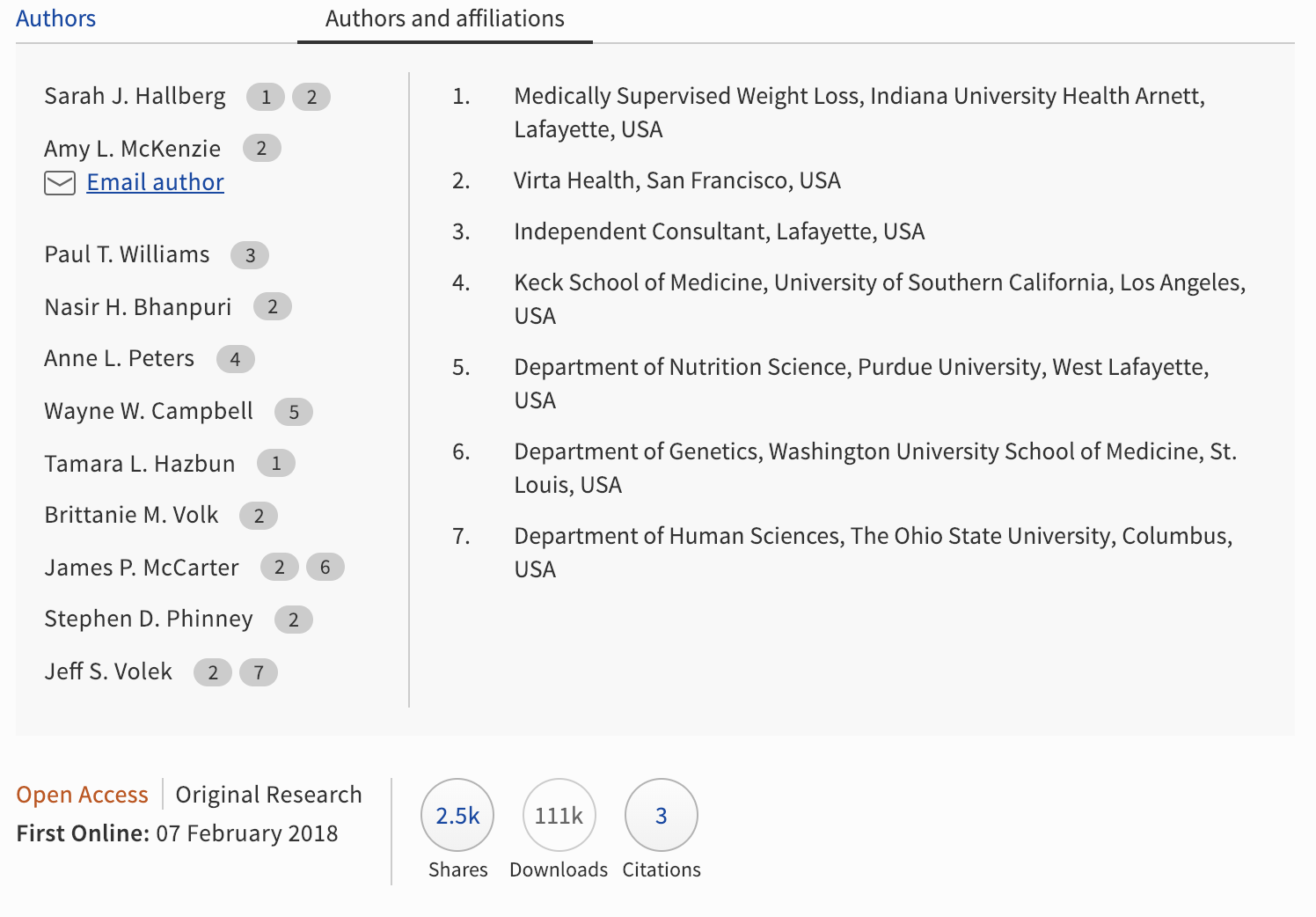

This other study, on the benefits of carbohydrate restriction on diabetes, was funded by a startup that sells ketogenic diet services for $370/month. That raises a red flag. But we can investigate it further. Let’s examine the authors’ affiliations:

The primary authors (1-3) are affiliated with the startup, with a weight loss organization, or with… no one. Authors 4-7 are affiliated with reputable universities and lend credibility to this study. So, this is a mixed bag. The study has only 3 citations, and it was published one year ago. All of these signals combined, without getting into question 1b, raise some questions about the study’s credibility.

Question 1b: Was the research conducted well?

This question is hard to answer without specialized knowledge in research methods. This is why we need independent experts to review the study before it sees the light of day. Which brings me to the next phase in the academic pipeline:

2. Review

There are debates in the academic world about this, but for a long time the gold standard for reviewing research has been the double-blind peer review. What does this mean?

Double-blind means that the reviewers do not know who the authors are, and the authors do not know who the reviewers are. The review process is anonymized at both ends. This is meant to ensure that the work is reviewed purely on its merits.

Peer means that the reviewers are experts in the same field as the researchers. Most of us researchers are also reviewers. For example, I submitted 2 papers to the conference CHI 2019, but I also reviewed a number of papers that were within my area of expertise.

We are skeptical of “scientific knowledge” that is not peer reviewed. There are a lot of “experts” out there who publish their own insights. Writing this blog, for example, is a form of self-publishing. So you should make a decision whether you want to believe this very post. Who is this author, Dr. V? How come she knows about this? What is her experience? What are her credentials? Who are her employers? Did anyone pay her to write this? If so, who? Of course, this post is not a form of research in any way.

3. Publication

The journal or conference where the research is published matters.

But first, is the work published in a journal, conference or book, or is it self-published? If self-published, be very careful about believing it unless you have the necessary expertise to evaluate it. Self-published work, like this blog post, has not undergone phase 2, Review, and therefore you have to play the role of the reviewer.

This is one of the main reasons why we kept the Guidelines for Human-AI Interaction secret until we heard that they were accepted at the conference CHI 2019. We wanted the credibility of steps 2 and 3 and wanted to avoid self-publishing.

Now, let’s assume the work was published in an academic journal or conference. Well, which one? There are many fake journals and conferences where authors pay to publish (self-publishing, again).

Once again, we ask: who is behind this journal/conference? Most reputable journals are published by professional associations, such as ACM, IEEE, the National Communication Association, etc. These associations are composed of researchers and scientists who work together to advance an academic discipline. Other factors we take into consideration when assessing a journal/conference are:

- publisher – is the journal published by a well-known academic publisher (e.g., Elsevier, Springer Verlag, Sage, Taylor & Francis)? This is not a guarantee of quality, but it is a good sign.

- editorial board – who are the editors and the reviewers, and what are their affiliations? Do they work at reputable research institutions?

- review process and acceptance rate – how is the work reviewed, and what share of submissions is accepted? A journal that accepts 90% of what’s submitted is very close to self-publishing. By contrast, Science Magazine accepts less than 7% of what’s submitted.

- impact factor is another (controversial) measure we might look at when assessing a journal’s credibility.

Another question you might wonder about is, who organizes and maintains this academic knowledge production system? Who is behind it? For the most part, this system is self-governed. We, researchers and scientists, gather at meetings and conventions, and have associations, where we debate how the system should work. The science of doing science is also part of what we work on. We research the very methods we use to create knowledge, and continuously seek to improve them. We disagree, we debate, and in the process, we hold each other accountable. We are skeptical of our own system and are currently debating, for example, whether blind peer review is realistic or useful.

I hope this not-so-short explanation of where knowledge comes from helps you a bit in navigating our complex information environment. I recognize that most people do not read academic journals and conference papers. The knowledge reaches the public through articles in the news media, “expert” videos on YouTube, and blog posts such as this one. All of that is second-hand information (and we’ll tackle it in a future post). When you encounter second-hand information, I hope you will remember to ask yourself: Where does this knowledge actually come from?
